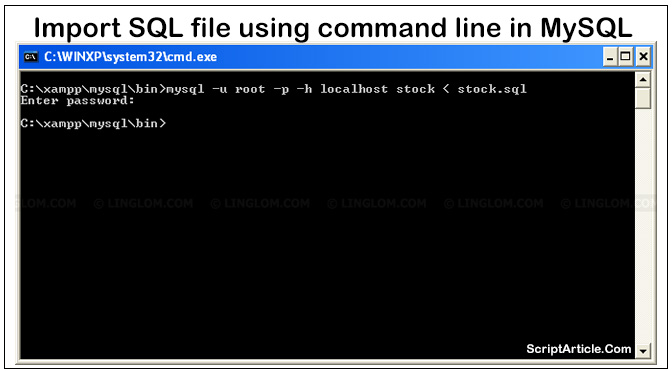

I am trying to import a 300 MB CSV file into a MySQL table. I am using this command: `LOAD DATA INFILE 'c:/csv/bigCSV.csv' IGNORE INTO TABLE table FIELDS TERMINATED BY ',' LINES TERMINATED BY '\r\n' IGNORE 1 LINES`. Importing in smaller batches would be faster than loading everything in one go. The reason is that the inserted records occupy memory held by a single process and are not released.

The mysqlimport client provides a command-line interface to the LOAD DATA SQL statement. Most options to mysqlimport correspond directly to clauses of LOAD DATA syntax. Invoke it as `mysqlimport [options] db_name textfile1 [textfile2 ...]`.

Generally, our objective is to execute SQL statements for data insertion from the shell. Let's suppose we have a user named sysadmin with access to a MySQL database. First, we'll use the sysadmin account to create a database and a table containing some records. Then, we'll insert more records into the table.

You can break the SQL file into several files (one per table) using a shell script, and then write a second shell script that imports the files one by one.

It will process a huge SQL file in nugget-size chunks; I haven't hit a SQL file big enough to fault it yet.

Hey man, thanks a lot. I tried 5 different sites and solutions, but yours did the trick, and now I've imported a 6GB MySQL dump into my computer without any problems. Exactly what I needed.

Hey, I know this is quite old, but I found this epically useful. I had forgotten how to do this and had a few gigs to import.

I had a larger task, though, and believe I used Toad for MySQL to import a couple of gigs of data to a remote MySQL server. Can't say it'll work for everyone and every situation, but it did a bang-up job for me a few times.

Thanks a lot Andy, I was aware that I should increase `--max_allowed_packet`, but didn't know that I should use the SOURCE command instead of the normal mysql import.

I found this post on MySQL imports using SOURCE informative and give John Andrews mad props, especially for having a sweet last name.
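To make the batching idea concrete, here is a minimal Python sketch that cuts a large CSV into smaller files and repeats the header line in each one, so every chunk can still be loaded with `IGNORE 1 LINES`. The function name `split_csv`, the chunk file naming, and the default of 100,000 rows per chunk are assumptions made for illustration. It also assumes one record per line, meaning no quoted fields that contain line breaks.

```python
from pathlib import Path


def split_csv(src, out_dir, rows_per_chunk=100_000):
    """Split src into numbered chunk files, repeating the header in each.

    Assumes one record per line (no quoted fields containing line breaks).
    Returns the list of chunk paths in the order they were written.
    """
    src = Path(src)
    out_dir = Path(out_dir)
    out_dir.mkdir(parents=True, exist_ok=True)
    chunks = []
    out = None
    try:
        # newline="" leaves the original line endings (e.g. \r\n) untouched.
        with src.open("r", newline="", encoding="utf-8") as f:
            header = f.readline()
            for i, line in enumerate(f):
                if i % rows_per_chunk == 0:
                    # Start a new chunk file and write the header first.
                    if out is not None:
                        out.close()
                    path = out_dir / f"{src.stem}_{len(chunks) + 1:04d}.csv"
                    out = path.open("w", newline="", encoding="utf-8")
                    out.write(header)
                    chunks.append(path)
                out.write(line)
    finally:
        if out is not None:
            out.close()
    return chunks
```

Each returned path can then be fed to its own `LOAD DATA INFILE` statement, or to `mysqlimport` if the chunk files are renamed to match the target table name.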
Ran into some fun times working with 20-50MB SQL files, so thought I'd share:

First, log in to MySQL via the command line, and increase your max_allowed_packet size via the run-time flag: `>$ mysql --max_allowed_packet=128M -u root`

Next, select your database, and use the `SOURCE /path/to/file` command instead of the `\. /path/to/file` method. Be sure to use a full path to your file, and obviously replace the path from my example with the path on your file system.
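If you want to script these steps rather than type them each time, one option is to assemble the client call in Python. The sketch below only builds the argument list and does not run anything. The helper name `build_source_command`, the database name `mydb`, and the dump path are hypothetical, and it relies on the mysql client's `-e` option to pass the `SOURCE` command non-interactively.

```python
import shlex


def build_source_command(sql_path, database, user="root",
                         max_allowed_packet="128M"):
    """Return the argv list for a mysql client call that SOURCEs sql_path."""
    return [
        "mysql",
        f"--max_allowed_packet={max_allowed_packet}",
        "-u", user,
        database,                     # database to select before sourcing
        "-e", f"SOURCE {sql_path}",   # sql_path should be a full path
    ]


# Hypothetical names, for illustration only.
cmd = build_source_command("/path/to/file", "mydb")
print(shlex.join(cmd))
```

The resulting list can be handed to `subprocess.run(cmd, check=True)` on a machine where the mysql client is installed. Add `-p` to the list if the account needs a password prompt.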
